Claude Code sub-agents are great until they're not. I hit the wall at around 25 tool calls - the agent runs out of turns, loses context, and can't access hooks or MCP servers. For simple searches and code reviews, sub-agents work fine. For anything that requires real depth - multi-phase implementations, long debugging sessions, tasks that need your full system - they fall apart.

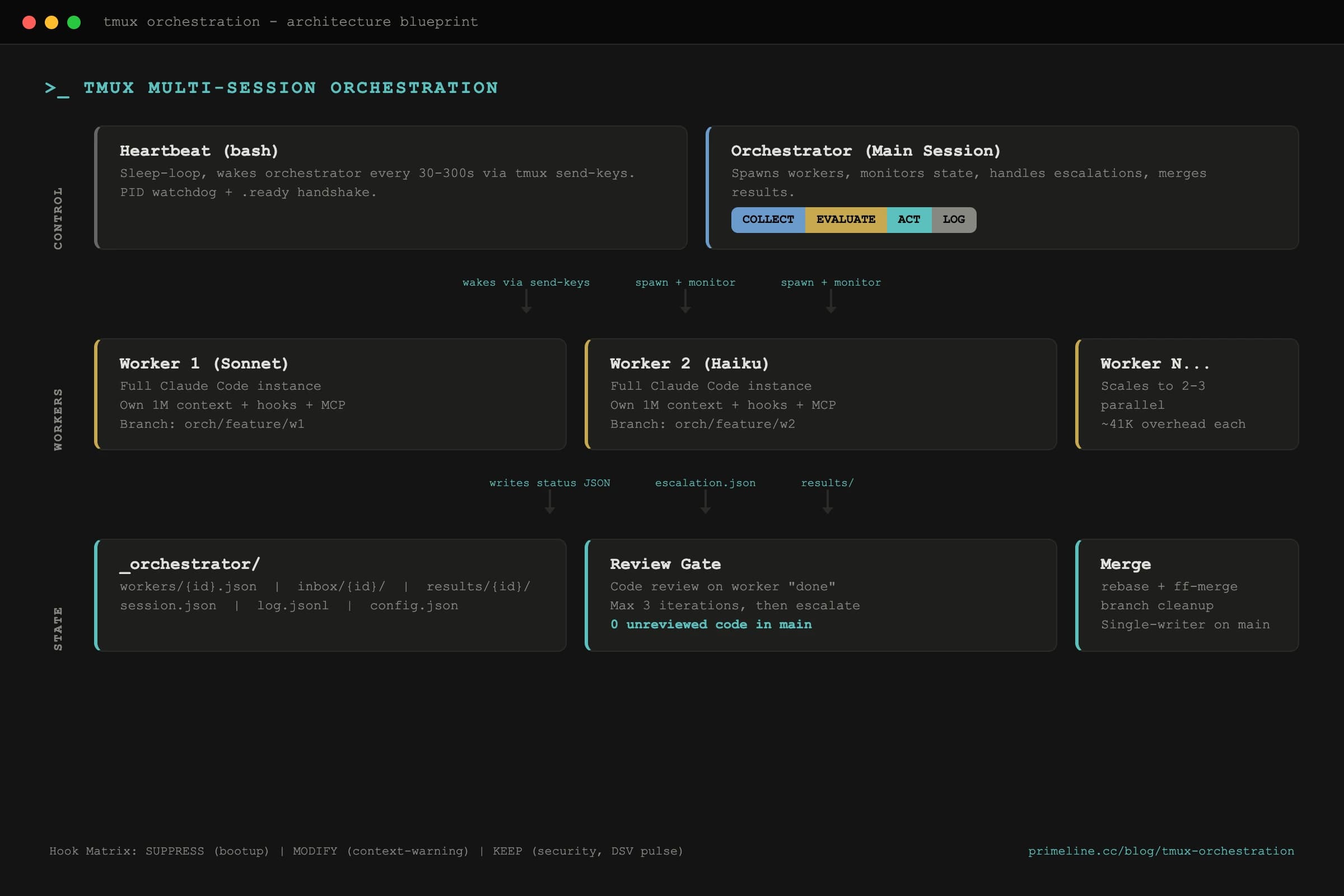

Claude Code tmux orchestration spawns full sessions as workers - each with its own 1M context, hooks, and MCP access. A bash heartbeat monitors them, a 4-step cycle (COLLECT-EVALUATE-ACT-LOG) coordinates work, and a review gate ensures zero unreviewed code reaches main.

I spent 3 weeks building a tmux orchestration layer that spawns full Claude Code sessions as workers. Each worker gets its own 1M context window, full hook access, MCP servers, compaction - everything the main session has. The orchestrator monitors them, enforces rules, and coordinates results through file-based state.

Want the foundational patterns first? The free 3-pattern guide covers memory, delegation, and knowledge graphs at concept level.

Why Do Claude Code Sub-Agents Hit Limits?

Sub-agents in Claude Code run inside your current session. They inherit a subset of tools, get a limited turn budget, and share your context window. That works for exploration, quick searches, and code reviews. But three constraints make them unusable for complex work:

Turn limits. Sub-agents max out around 25 tool calls. A multi-file refactoring that needs 50+ reads, writes, and test runs can't finish in one sub-agent pass. You end up chaining sub-agents manually, losing context between each handoff.

No hooks or MCP. Sub-agents don't trigger your hook system. No security checks, no context warnings, no auto-delegation. They also can't access MCP servers like Kairn for knowledge recall. They're running blind compared to your main session.

Shared context. Every sub-agent eats into your main session's context window. Spawn three research agents in parallel, and you've burned ~123K tokens on setup overhead alone - over 60% of a 200K window before any real work starts.

| Sub-agents | tmux workers |
| --- | --- |
| ~25 turn limit | Unlimited turns (own session) |
| No hooks or MCP access | Full hooks + MCP + Skills |
| Shares parent context window | Own 1M context window |
| No compaction possible | Compaction works normally |
| Limited tool access | Complete Claude Code instance |

How Does Claude Code tmux Orchestration Work?

The architecture has three components: an orchestrator session, a bash heartbeat script, and worker sessions. All coordination happens through files in an _orchestrator/ directory - no custom servers, no daemons, no complex IPC.

The orchestrator is your main Claude Code session. It spawns workers, monitors their status, handles escalations, and merges results. The heartbeat script is a simple bash loop that keeps the orchestrator alive between monitoring cycles by sending prompts via tmux send-keys. Workers are independent Claude Code instances running in tmux windows, each with a specific task and namespace isolation.
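
To make the heartbeat idea concrete, here is a minimal sketch of such a loop. It is not the script from the repo: the function names (`send_prompt`, `heartbeat_tick`, `heartbeat_loop`), the cycle prompt text, and the use of a marker file to signal "last cycle finished" (modeled on the `.ready` file this article mentions) are all assumptions made for illustration.

```shell
#!/usr/bin/env bash
# Hypothetical heartbeat sketch; names, paths and prompt text are assumptions.

# Send one single-line prompt to a tmux target (literal mode + separate Enter).
send_prompt() {
  local target=$1 prompt=$2
  tmux send-keys -t "$target" -l -- "$prompt"
  sleep 0.5
  tmux send-keys -t "$target" Enter
}

# One heartbeat tick: if the marker file says the previous cycle finished,
# consume the marker and request the next cycle. Returns 1 while still busy.
heartbeat_tick() {
  local target=$1 ready_file=$2 prompt=$3
  if [ ! -e "$ready_file" ]; then
    return 1
  fi
  rm -f "$ready_file"
  send_prompt "$target" "$prompt"
}

# Tick forever, sleeping INTERVAL seconds between attempts.
heartbeat_loop() {
  local target=$1 ready_file=$2 interval=${3:-120}
  while true; do
    heartbeat_tick "$target" "$ready_file" "Run the next orchestrator cycle." || true
    sleep "$interval"
  done
}
```

Something like `heartbeat_loop "$SESSION_NAME":main _orchestrator/.ready 120` would then run in its own terminal; the window name `main` is again only a placeholder.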

Claude Code tmux send-keys: The Foundation

The entire architecture depends on one thing: reliably delivering prompts to a running Claude Code session via tmux send-keys. Getting this right took more iteration than I expected.

Three things matter. First, use send-keys -l (literal mode) with a separate Enter keystroke. This avoids shell interpretation issues with special characters in prompts. Second, strip ANSI escape codes before parsing any output from capture-pane - Claude Code's terminal output is full of color codes and cursor movements that break regex matching. Third, implement a .ready handshake so the orchestrator only sends when a worker is actually idle.

Here's the core send pattern:

```bash
# Send prompt to worker (literal mode + separate Enter)
tmux send-keys -t "$SESSION_NAME":w1 -l "$PROMPT_TEXT"
sleep 0.5
tmux send-keys -t "$SESSION_NAME":w1 Enter

# Verify delivery via capture-pane
sleep 2
# ANSI strip. The escape byte comes from $'\x1b' because BSD/macOS sed
# does not understand \x1b inside the expression.
ESC=$'\x1b'
OUTPUT=$(tmux capture-pane -t "$SESSION_NAME":w1 -p |
  sed "s/${ESC}\[[0-9;?]*[a-zA-Z]//g")

# Check if Claude is processing (not idle)
if echo "$OUTPUT" | grep -qE '(Running|thinking)'; then
  echo "Prompt delivered successfully"
fi
```

For multiline prompts (like worker startup instructions), send-keys breaks on newlines. The fix: use tmux load-buffer with paste-buffer:

```bash
# Multiline prompt via paste-buffer
# (printf '%s' avoids the trailing newline and backslash handling of echo)
printf '%s' "$STARTUP_PROMPT" | tmux load-buffer -b "orch-w1" -
tmux paste-buffer -p -d -b "orch-w1" -t "$SESSION_NAME":w1
tmux send-keys -t "$SESSION_NAME":w1 Enter
```

This lives in primeline-ai/claude-tmux-orchestration - the tmux orchestration layer. Free, MIT, no build step.

How Does the Worker Spawn Sequence Work?

Spawning a worker is a 6-step sequence. Each step needs careful timing - Claude Code takes a few seconds to boot, and rushing the process causes missed prompts.

```bash
# 1. Create tmux window
tmux new-window -t "$SESSION_NAME" -n w1

# 2. Start Claude with worker identity (path quoted in case it contains spaces)
tmux send-keys -t "$SESSION_NAME":w1 -l \
  "export ORCHESTRATOR_WORKER_ID=w1 && cd '$PROJECT_ROOT' && claude --dangerously-skip-permissions"
sleep 0.5 && tmux send-keys -t "$SESSION_NAME":w1 Enter

# 3. Wait for Claude to boot (poll the last 12 pane lines for the idle prompt)
#    capture-pane has no line-count flag, so trim with tail instead
for i in $(seq 1 60); do
  OUTPUT=$(tmux capture-pane -t "$SESSION_NAME":w1 -p | tail -n 12)
  if echo "$OUTPUT" | grep -qE '❯[[:space:]]*$'; then break; fi
  sleep 1
done

# 4. Switch to target model
tmux send-keys -t "$SESSION_NAME":w1 -l "/sonnet"
sleep 0.5 && tmux send-keys -t "$SESSION_NAME":w1 Enter

# 5. Send startup prompt (multiline via paste-buffer)
printf '%s' "$WORKER_TEMPLATE" | tmux load-buffer -b "orch-w1" -
tmux paste-buffer -p -d -b "orch-w1" -t "$SESSION_NAME":w1
tmux send-keys -t "$SESSION_NAME":w1 Enter

# 6. Wait for worker to write first status (give up after 60 seconds)
for i in $(seq 1 60); do
  [ -f "_orchestrator/workers/w1.json" ] && break
  sleep 1
done
[ -f "_orchestrator/workers/w1.json" ] || echo "w1 wrote no status file" >&2
```

The worker startup template is lightweight on purpose. Workers are full Claude Code instances - they inherit all your project's skills, hooks, and MCP servers. The template just gives them task context, coordination rules, and one critical instruction: ask, don't guess.
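
For a sense of how small such a template can be, here is a hypothetical sketch that renders one from a worker ID and a task description. The wording of the template, the function name `render_worker_template`, and the assumption that the inbox lives under `_orchestrator/` are mine; only the status file path and the "ask, don't guess" rule come from the text above.

```shell
#!/usr/bin/env bash
# Hypothetical template renderer; the template wording is an assumption.

# render_worker_template WORKER_ID TASK
# Prints a startup prompt containing task context and coordination rules.
render_worker_template() {
  local worker_id=$1 task=$2
  cat <<EOF
You are worker ${worker_id} in a tmux-orchestrated Claude Code setup.

Task:
${task}

Coordination rules:
- Keep _orchestrator/workers/${worker_id}.json updated with your status.
- If anything is ambiguous: ask, don't guess. Write the question to
  _orchestrator/inbox/${worker_id}/escalation.json and wait for an answer.
- Report "done" in your status file when finished, then wait for review.
EOF
}
```

The result can be captured with `WORKER_TEMPLATE=$(render_worker_template w1 "Implement phase 5")` and pasted into the worker as in step 5.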

Claude Code tmux Hook Matrix: What Workers Inherit

Workers run with ORCHESTRATOR_WORKER_ID set in their environment. Hooks check this variable and adjust behavior in three categories:

Workers inherit memory bootup context from the orchestrator template instead of running it themselves. But security hooks and DSV pulse monitoring stay fully active.

The KEEP category is the important design choice. Workers get the same security tier checks, the same self-correction hooks, the same reasoning hygiene monitoring as the main session. That's the whole point of Tier 3 - these are full sessions, not stripped-down executors.
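
A hook can branch on the worker variable with a guard along these lines. This is a sketch under assumptions: the function name `should_run_hook` and the lowercase labels are hypothetical, and `skip` is a stand-in for hooks (such as memory bootup) that workers do not run themselves; only KEEP is named in this article.

```shell
#!/usr/bin/env bash
# Hypothetical hook guard; category labels other than "keep" are assumptions.

# should_run_hook CATEGORY
# Exit status 0 means "run this hook in the current session".
should_run_hook() {
  local category=$1
  # No worker ID set: this is the main session, which runs every hook.
  if [ -z "${ORCHESTRATOR_WORKER_ID:-}" ]; then
    return 0
  fi
  case "$category" in
    keep) return 0 ;;  # security checks, self-correction, monitoring
    skip) return 1 ;;  # e.g. memory bootup, already covered by the template
    *)    return 0 ;;  # unknown category: fail safe by running the hook
  esac
}
```

A hook script would start with something like `should_run_hook skip || exit 0`, so it becomes a no-op inside workers while staying active in the main session.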

How Does the Claude Code Orchestrator Cycle Work?

Every monitoring interval, the orchestrator runs a 4-step cycle. Intervals adapt based on worker state: 30 seconds when stuck, 120 seconds during normal operation, 300 seconds when idle.
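
The adaptive interval can be sketched as a small lookup. The three durations come from the paragraph above; the function names, the condition labels, and the rule that the most urgent worker decides the interval when several workers are running are assumptions.

```shell
#!/usr/bin/env bash
# Hypothetical interval picker; labels and the "shortest wins" rule are assumptions.

# interval_for CONDITION -> seconds until the next cycle for one worker
interval_for() {
  case "$1" in
    stuck) echo 30 ;;
    idle)  echo 300 ;;
    *)     echo 120 ;;  # normal operation
  esac
}

# cycle_interval CONDITION... -> shortest interval across all workers
cycle_interval() {
  local shortest=300 condition seconds
  for condition in "$@"; do
    seconds=$(interval_for "$condition")
    if [ "$seconds" -lt "$shortest" ]; then
      shortest=$seconds
    fi
  done
  echo "$shortest"
}
```

With one stuck worker and one idle worker, `cycle_interval stuck idle` picks the 30-second interval, so the stuck worker is checked again quickly.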

COLLECT reads each worker's status file and falls back to capture-pane idle detection if a status file is stale. The idle detector checks the last 12 lines for Claude's prompt character, with a spinner override - if Claude is actively thinking, it's not idle even if the prompt is visible.
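
The idle check with spinner override might look roughly like this. It is a sketch, not the repo's detector: the function name `is_idle` is hypothetical, and the activity pattern simply reuses the `Running|thinking` match from the send example, which may not cover every status line Claude Code prints.

```shell
#!/usr/bin/env bash
# Hypothetical idle detector; the activity pattern is an assumption.

# is_idle: reads captured pane text on stdin, exits 0 if the worker looks idle.
is_idle() {
  local tail_text
  tail_text=$(tail -n 12)  # only the last 12 lines count
  # Spinner override: visible activity wins over a visible prompt.
  if printf '%s\n' "$tail_text" | grep -qE '(Running|thinking)'; then
    return 1
  fi
  # Idle means a line ending in the prompt character plus optional whitespace.
  printf '%s\n' "$tail_text" | grep -qE '❯[[:space:]]*$'
}
```

It is meant to be fed from the pane, for example `tmux capture-pane -t "$SESSION_NAME":w1 -p | is_idle && echo "w1 is idle"`.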

EVALUATE maps each worker to one of six states: SAFE_TO_RESTART, DO_NOT_INTERRUPT, CONTEXT_LOW_CONTINUE, RATE_LIMITED_WAIT, ERROR_STATE, or UNKNOWN. Only idle workers receive commands. This step also scans inbox/{id}/escalation.json for worker questions - blocking escalations get priority handling.
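
The escalation scan could be sketched as follows. The article does not show the schema of `escalation.json`, so the `"blocking": true` field, the function name `pending_escalations`, and the location of `inbox/` under the orchestrator directory are assumptions for illustration only.

```shell
#!/usr/bin/env bash
# Hypothetical escalation scan; the JSON field name is an assumption.

# pending_escalations [ROOT]
# Prints "<kind> <worker-id>" per pending escalation, blocking ones first.
pending_escalations() {
  local root=${1:-_orchestrator} want kind file
  for want in blocking normal; do
    for file in "$root"/inbox/*/escalation.json; do
      [ -e "$file" ] || continue  # glob matched nothing
      if grep -qE '"blocking"[[:space:]]*:[[:space:]]*true' "$file"; then
        kind=blocking
      else
        kind=normal
      fi
      if [ "$kind" = "$want" ]; then
        echo "$kind $(basename "$(dirname "$file")")"
      fi
    done
  done
  return 0
}
```

Two passes over the inbox keep the code free of arrays and guarantee that blocking questions are listed before everything else.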

ACT executes decisions: send reminders, answer escalations, trigger code review when a worker reports "done", or merge results after review passes. The review gate is a hard rule - zero unreviewed worker code lands on main. Workers get up to 3 review iterations before the orchestrator escalates to the user.
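
The review gate reduces to a small decision function. This sketch assumes a review verdict of `pass` or anything else, and counts the review that just finished as the current iteration; the function name `review_gate` and the action labels are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical review gate; verdict and action labels are assumptions.

# review_gate VERDICT ITERATION [MAX_ITERATIONS]
# Prints the next action: merge, revise, or escalate.
review_gate() {
  local verdict=$1 iteration=$2 max_iterations=${3:-3}
  if [ "$verdict" = "pass" ]; then
    echo merge      # only reviewed, passing work reaches main
  elif [ "$iteration" -lt "$max_iterations" ]; then
    echo revise     # send feedback to the worker for another attempt
  else
    echo escalate   # review budget used up: hand over to the user
  fi
}
```

Because `merge` is only reachable through a passing verdict, there is no code path that lands unreviewed work on main.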

LOG writes an audit entry to log.jsonl, updates session state, and touches .ready to signal the heartbeat that the cycle completed.
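
A minimal version of the LOG step, assuming one JSON object per line with hypothetical fields `ts`, `cycle`, and `summary` (the real log schema is not shown in this article), could look like this:

```shell
#!/usr/bin/env bash
# Hypothetical LOG step; the log entry fields are assumptions.

# log_cycle ROOT CYCLE_NUMBER SUMMARY
# Appends one audit line to ROOT/log.jsonl and touches ROOT/.ready.
log_cycle() {
  local root=$1 cycle=$2 summary=$3 timestamp
  timestamp=$(date -u +%Y-%m-%dT%H:%M:%SZ)
  # Escape backslashes and double quotes so the line stays valid JSON.
  # Control characters such as newlines are not handled in this sketch.
  summary=${summary//\\/\\\\}
  summary=${summary//\"/\\\"}
  printf '{"ts":"%s","cycle":%d,"summary":"%s"}\n' \
    "$timestamp" "$cycle" "$summary" >> "$root/log.jsonl"
  # Signal the heartbeat that this cycle has completed.
  touch "$root/.ready"
}
```

Writing the log line before touching `.ready` means the heartbeat never sees a finished cycle without its audit entry.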

Real Result: 3 Autonomous Phases

I used this system to run Lucy (a Telegram/Discord assistant) through phases 5-7 autonomously. The orchestrator spawned a worker session, gave it the phase plan, and monitored progress. The worker ran for over an hour - implementing cascading delegation, pairing a second user, and writing 6 iPhone Shortcut guides. It escalated twice (both non-blocking questions about naming conventions), and the review gate caught one issue on the first pass.

Total time saved: roughly 3 hours of context-switching between the three phases. The worker held full context across all of them because it had its own 1M window. With sub-agents, I would have needed 6+ handoffs with context loss at each boundary.

The full tmux orchestration system lives in its own repo: primeline-ai/claude-tmux-orchestration. Open source, MIT licensed. It pairs naturally with Evolving Lite for the memory and delegation layers workers inherit through their full Claude Code session.